Rapid and accurate data capture is crucial to collaborative design, construction, and operational ecosystems. The solutions for capture have greatly improved, and so have the mechanisms for bringing the resultant models into the process.

“You need to build that single source of truth that everybody can trust to use for different workflows, different decision-making processes. [You can use it] to collaborate and to contribute to creating and curating a digital twin.” – Pascal Liens Martinez, director, strategic partnerships, Bentley Systems.

A digital twin for infrastructure design, construction, and operation carries elements of the real world, deftly meshed with the virtual. The physical side can include high-fidelity 3D geometry, attributes, and, increasingly, real-time sensor data from the IoT. Suites of design software, project management, and sensor input systems have matured to include elements of AI, rules-based design, simulation, and synchronization throughout the infrastructure lifecycle, all in cloud-based collaborative environments. So have the solutions for capturing reality data: LiDAR, imaging, and remote sensing, via mobile and airborne platforms, and even apps on phones and tablets. These capture solutions have become more precise, less expensive, and easier to operate; they are also smart enough to reduce or eliminate many sources of error.

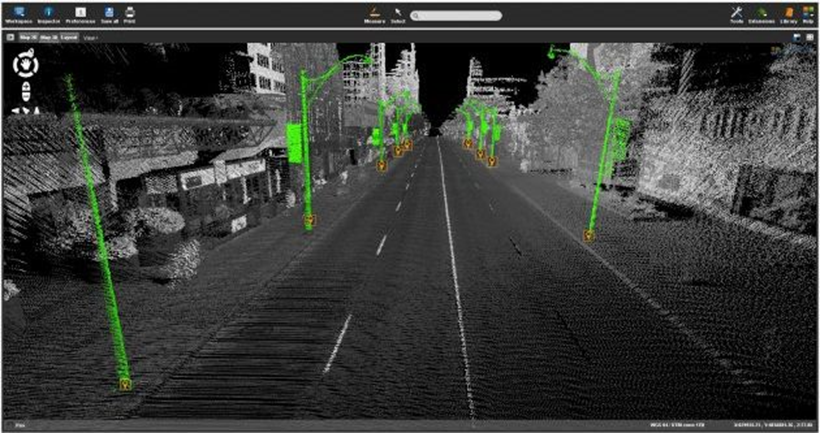

Capture, therefore, might be the easy part, but what of the crucial steps of processing and injection into digital twins? I recently spoke with Pascal Liens Martinez, director of strategic partnerships at Bentley Systems, about how these steps are managed in the company’s digital twin ecosystem. The main application for these steps is ContextCapture, which generates 3D reality mesh models from any combination of sources, including images and LiDAR point clouds. Using images and LiDAR from static scanners, mobile platforms, backpacks, and handheld units is common for initial pre-design modeling, as-built, and compliance surveys. However, with their rapidly improving sensors, phones and tablets are proving to be powerful solutions that can inject geometry into digital twins at many more points in the overall process.

Announced in November of 2022 at the annual Year in Infrastructure and Going Digital Awards event was iTwin Capture. As the press release issued at the event describes: “For capturing, analyzing, and sharing reality data, [it] enables users to easily create engineering-ready, high resolution 3D models of infrastructure assets using drone video and survey imagery from any digital camera, scanner, or mobile mapping device. Infrastructure digital twins of any existing assets can accordingly start with reality modeling, rather than requiring a BIM model. iTwin Capture offers a highest-fidelity, and versatile means of capturing reality to serve as the digital context for surveying, design, monitoring, and inspection processes.” iTwin Capture is one of several new applications in a software ecosystem that Bentley calls iTwin technology.

This includes a mobile app that leverages the cameras on phones and tablets. I remember using the ContextCapture app to create 3D models years ago, and the process has only improved.

Painting Reality

“The purpose of what we are doing on top of phones and tablets is basically to help users capture the right thing,” said Martinez. “To get an initial coarse representation of the object or environment, we are capturing image frames on the fly. And we can use images or video footage shot in parallel to LiDAR acquisition. We capture the photo, then another, and so on… we paint the global environment and give visual feedback to the operator to know what they have captured, and the quality—when everything is green, you’ve got all the frames you need.”

Now that processes have become highly automated, everything works well when the data is right; when the data is not right, the model can be broken, and so can the workflow. Ensuring that the data is right is a high priority in Bentley’s development of such applications. There has been a lot of buzz around LiDAR/camera integrations in, for instance, iPhones and iPads, with some promising, albeit limited-range and limited-precision, implementations for AEC applications. Martinez explained that they are very carefully examining how, and if, such new applications could fit into their data capture strategy.

“You could do it, but it depends on the quantity of the point cloud,” said Martinez. “If you’re using the point cloud that’s acquired by the device now, it’s not containing a lot of points. It’s okay for a coarse reconstruction, but only at a very short range of a few meters. If you mesh it and you texture it, you may get a rough sense of what the scene is, and that would be okay. There could be many use cases, but not if you want to get to engineering grade kind of applications.” He said they are actively evaluating such options.

While LiDAR done with conventional, static, large-format scanners can yield dense point clouds, even at long ranges, it can be costly and time-consuming. Mobile, handheld SLAM and backpack systems have brought a lot more flexibility. Conventional LiDAR may be the best option for the upfront pre-design survey. However, it may not be the sweet spot for the many instances in the lifecycle of the infrastructure that could benefit from rapid, topical capture. “You cannot extract more details from what is captured by the LiDAR unless you use a different type of input,” said Martinez. “And that’s one of the reasons why we are capturing photos and not relying only on the point cloud; to capture a real geometry with this you need a higher density. We capture frames from the images, which provide information pixel by pixel. That’s already more information than the LiDAR points.”
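To put rough numbers on the "pixel by pixel" point, the standard ground sample distance (GSD) relation from photogrammetry gives the footprint of one pixel on a surface facing the camera. The sketch below is only an illustration: the function names and all sensor values are assumptions chosen for easy arithmetic, not the specifications of any particular phone, camera, or scanner. Pixel density is also an upper bound, since a reconstruction will not recover one 3D point per pixel.

```python
def ground_sample_distance(pixel_pitch_um, focal_length_mm, distance_m):
    """Footprint of one pixel (in metres) on a surface facing the camera.

    Standard pinhole relation: GSD = pixel pitch * distance / focal length.
    """
    pixel_pitch_m = pixel_pitch_um * 1e-6
    focal_length_m = focal_length_mm * 1e-3
    return pixel_pitch_m * distance_m / focal_length_m


def image_samples_per_m2(pixel_pitch_um, focal_length_mm, distance_m):
    """Pixels landing on one square metre of surface (an upper bound on detail)."""
    gsd = ground_sample_distance(pixel_pitch_um, focal_length_mm, distance_m)
    return 1.0 / (gsd * gsd)


def density_ratio(samples_per_m2, lidar_points_per_m2):
    """How many image samples there are for each LiDAR point on the same surface."""
    return samples_per_m2 / lidar_points_per_m2


# Illustrative (assumed) values: 2.0 micron pixels, 5.0 mm lens, 2.5 m from the wall.
gsd = ground_sample_distance(2.0, 5.0, 2.5)
samples = image_samples_per_m2(2.0, 5.0, 2.5)
```

With these assumed values each pixel covers about one millimetre of the wall. To compare, supply the point density your own scanner reports for the same surface to `density_ratio`.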

Deciding which application to use depends on the use case. If you’re interested in capturing a room just to get the right dimensions, or to capture the furniture for, say, a real estate application, or are looking at the condition of the walls, it might be okay to use a point cloud, turn it into a mesh, and texture the mesh from the photos you’re capturing at the same time. Still, you need to capture the images. In many instances, you could achieve the same results using only the images, so why add the overhead of LiDAR? You can drop the images into the 3D model to texturize the mesh.

“If you want to extract higher precision [images], that is where we rely more on the photogrammetric approach, where we just use the point cloud to help with the completion of the acquisition to make sure that’s working,” said Martinez. The LiDAR can provide a check for the image-based model; however, the richness of the matched pixels in successive frames will yield higher density and precision at certain ranges. People often picture (no pun intended) LiDAR as inherently far superior to photogrammetry in all instances. Yes, in some instances, but mostly not. I remember meeting the survey team at CERN, and they explained how they did alignments of the enormous experiment modules for the Large Hadron Collider when these are moved back into place after maintenance. Did they use conventional surveying instruments or LiDAR? No, they used photogrammetric methods (using a high-quality DSLR).

Integration into a Digital Twin

Bentley’s ContextCapture enables users to create the model and mesh, analyze, import interoperable formats, measure, and touch up. It can take in many types of inputs, from cameras, standard format data from other sources, LiDAR point clouds, etc., and create 3D models. There is also a cloud-processing option. These models are ready for ingestion into their design packages, like OpenRoads, MicroStation, OpenBuildings, and many others. And through Bentley’s iTwin technology the models can be utilized in a collaborative design/construction/operation environment. And this can be done in the cloud.

The word “cloud,” though, can evoke fears of single points of failure. The harsh reality is that even in the time of 5G, no connection is immune to instability. So, when you have a giant project with many teams, like design teams from civil to MEP, project and construction management, owners, and stakeholders, the idea of a single giant model out in the cloud seems like it could be a disaster waiting to happen. However, there could be just as much potential mayhem if each team kept a copy of the model, and then there is the issue of version control.

The Bentley approach to a distributed environment coordinated via the cloud was announced about five years ago. Based on their iModel technology (think of a very smart BIM model), each team can have copies of the model, and even work in them offline. When a team makes a change or needs to ingest the changes other teams have made, all that they have to transact in the cloud are those changes. The process deals purely with those transactions, so there is no giant model to choke on when syncing.
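The idea of exchanging only changes can be illustrated with a toy sketch. This is not Bentley's iModel API or data format; `Hub`, `LocalCopy`, and `Changeset` are hypothetical names, and conflict handling is reduced to "last writer wins" to keep the example short.

```python
from dataclasses import dataclass, field


@dataclass
class Changeset:
    """One small batch of edits: element id -> new value (None means delete)."""
    index: int
    edits: dict


@dataclass
class Hub:
    """Hypothetical cloud-side timeline; it stores changesets, never a full model."""
    changesets: list = field(default_factory=list)

    def push(self, edits):
        cs = Changeset(index=len(self.changesets) + 1, edits=dict(edits))
        self.changesets.append(cs)
        return cs

    def since(self, index):
        """Changesets recorded after the first `index` ones."""
        return self.changesets[index:]


@dataclass
class LocalCopy:
    """A team's working copy, usable offline between syncs."""
    elements: dict = field(default_factory=dict)
    applied: int = 0                       # changesets already merged from the hub
    pending: dict = field(default_factory=dict)  # local edits not yet pushed

    def _apply(self, element_id, value):
        if value is None:
            self.elements.pop(element_id, None)
        else:
            self.elements[element_id] = value

    def edit(self, element_id, value):
        """Change the local model and remember the edit for the next push."""
        self._apply(element_id, value)
        self.pending[element_id] = value

    def pull(self, hub):
        """Merge only the changesets this copy has not seen; return how many."""
        new = hub.since(self.applied)
        for cs in new:
            for element_id, value in cs.edits.items():
                self._apply(element_id, value)
            self.applied = cs.index
        return len(new)

    def push(self, hub):
        """Catch up first, then send pending edits as one changeset."""
        if not self.pending:
            return None
        self.pull(hub)
        for element_id, value in self.pending.items():
            self._apply(element_id, value)  # naive: local edit wins on conflict
        cs = hub.push(self.pending)
        self.applied = cs.index
        self.pending = {}
        return cs
```

In this sketch a civil team and an MEP team would each hold a `LocalCopy`, call `edit` while offline, and later `push` and `pull` against a shared `Hub`; only the small edit dictionaries ever cross the network.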

This is especially beneficial for design change orders, incremental construction as-builts, and for adding/updating elements of the model geometry via iTwin Capture. For instance, some ducts or pipes are installed; a quick capture provides a way to see how this lines up with the design model. The model can then be updated, and the whole team sees this. Perhaps an unanticipated clash is discovered; a change order can be created and managed through Bentley’s ProjectWise (a project delivery suite), and construction simulation and scheduling can be done in SYNCHRO. This is not an endorsement of these products, but, instead, a good example of a large umbrella of solutions that can work together in an infrastructure collaborative environment. There are a lot of great products out there for different AEC needs.
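As a rough illustration of checking a capture against the design, the sketch below compares captured surface points with a designed pipe modeled as a straight cylinder. It is a simplified assumption of how such a check could work, not the method used by any Bentley product; the function names, units (metres), and tolerance are hypothetical.

```python
import math


def distance_to_segment(p, a, b):
    """Shortest distance from 3D point p to the segment from a to b."""
    ab = [b[i] - a[i] for i in range(3)]
    ap = [p[i] - a[i] for i in range(3)]
    ab_len2 = sum(c * c for c in ab)
    if ab_len2 == 0:
        return math.dist(p, a)  # degenerate segment: a and b coincide
    # Parameter of the closest point along the segment, clamped to [0, 1].
    t = sum(ap[i] * ab[i] for i in range(3)) / ab_len2
    t = max(0.0, min(1.0, t))
    closest = [a[i] + t * ab[i] for i in range(3)]
    return math.dist(p, closest)


def pipe_deviations(points, axis_start, axis_end, radius, tolerance):
    """Return (point, deviation) pairs for captured points that lie farther
    than `tolerance` from the designed pipe surface."""
    flagged = []
    for p in points:
        deviation = abs(distance_to_segment(p, axis_start, axis_end) - radius)
        if deviation > tolerance:
            flagged.append((p, deviation))
    return flagged


# Assumed design: a 10 m pipe along the x axis, 0.1 m radius, 1 cm tolerance.
captured = [(5.0, 0.1, 0.0), (5.0, 0.0, 0.15)]
flagged = pipe_deviations(captured, (0.0, 0.0, 0.0), (10.0, 0.0, 0.0), 0.1, 0.01)
```

Here the first captured point sits on the designed surface, while the second is about 5 cm off and is flagged for review.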

Avoiding rework up front is a prime goal. Martinez said that rework may represent as much as 30% of construction costs globally. Such sobering numbers provide the impetus for BIM implementation, digital twins, and infrastructure collaborative environments. Are the terms “BIM” and “digital twin” a bit overworked? Not really; they describe in many ways what infrastructure design, construction, and operations did in years, decades, and centuries past, only with paper in those days. We can’t update the world’s infrastructure using paper plans and Excel spreadsheets alone (though some still try).

“The digital twin is partially designed, partially reality,” said Martinez. “Partially IT, data and metadata on top of that. That needs to enable the collaboration between the different stakeholders, whether they are in the same organization or from multiple organizations. To visualize the same thing or visualize what they need from the digital twin to make informed decisions, and make sure that the single source of truth, something that they can believe, is reflecting reality.”

If the BIM model or digital twin is high fidelity, current, and complete, it can enable higher productivity, in no small part by reducing or eliminating common “project killers.” “Real progress monitoring or defect detection, examining inaccuracy on the reconstruction, model, do clash detection or accurate measurements to make sure the structural elements are in the right place, that a pipe is in the right place, and so forth,” said Martinez. “Then you’ll need precision, and precision is coming through a different type of acquisition that’s requiring more accurate and more comprehensive methods and devices.” That can mean more points, which may call for static LiDAR, but perhaps not as often as in the past.

Cameras in phones are becoming as high resolution and quality as yesterday’s DSLRs. Not to mention some astounding new 12K modular cameras for mobile and backpack integration. No matter if the source is photogrammetry-based capture or point clouds from LiDAR, the injection process, in the Bentley ecosystem, is still ContextCapture (and now iTwin Capture).

Visualizing the Future that Is Already Here

Another case for rapid, precise, and easily integrated capture in the collaborative environment is for visualization, virtualization, and simulation. We are starting to see real-world applications of mixed reality (MR, XR) in design and construction. One example was an interactive demonstration at the 2019 Mobile World Congress of a building design in SYNCHRO using the Microsoft HoloLens XR headset.

Fast-forward to the 2022 Year in Infrastructure and Going Digital Awards event, where Bentley announced and demonstrated iTwin Experience. The press release issued at the event describes it as “a new cloud product to empower owner-operators’ and their constituents’ insights into critical infrastructure by visualizing and navigating digital twins. Significantly, iTwin Experience accelerates engineering firms’ ‘digital integrator’ initiatives to create and curate asset-specific digital twins, incorporating their proprietary machine learning, analytics, and asset performance algorithms. iTwin Experience acts as a ‘single pane of glass,’ overlaying engineering technology (ET), operations technology (OT), and information technology (IT) to enable users to visualize, query, and analyze infrastructure digital twins in their full context, at any level of granularity, at any scale, all geo-coordinated and fully searchable.”

The demonstration at the 2022 Year in Infrastructure and Going Digital Awards event was impressive. A headset and a wide screen were used together for an immersive look at several real-world projects, one of which was construction in progress inside the main building of the ITER fusion reactor in the south of France. Beyond the visual impact, the potential to boost project collaboration could translate to substantial gains in productivity, especially by avoiding work disruptions and rework. For example, someone on site could capture an installed feature photogrammetrically on their tablet, and it could be updated in the live model while the whole team is engaged.

That kind of confluence of innovation and advancements in applications and solutions for infrastructure design, construction, and operations (as Lebowski said) sort of “ties the room together.” Cameras are better, LiDAR is getting more affordable and portable, IoT is bringing in live sensor data, AI is aiding modeling, design, and simulations, and there are broad and powerful infrastructure collaborative suites. The pieces are all in place… now we have to get on with tackling the global infrastructure gap.
