Respective advantages and disadvantages are conditional. Knowing what those conditions are is the key to leveraging one or the other—or both—to optimize results and productivity for specific types of work.

The answer to this question, posed to surveyors and other geospatial practitioners, is often “it depends.” That conditionality is the very reason why surveyors and other skilled practitioners are, or should be, sought to do high-precision work.

This is a long read, but the subject is not simple. If you are going to make decisions, potentially costly decisions, about what to use and which workflows and approaches to adopt, then you need to endure a few long reads.

Any time there is a discussion about “RTK vs. RTN,” you will hear strong views. The inevitable “VRS sucks!” does nothing to further the discussion. Note that VRS is often used as a generic term for network RTK (NRTK) and RTN, but there are various approaches to what is called “VRS”, and other NRTK approaches like “master-auxiliary”, FKP, and more, that all yield similar results. But more on that later.

There’s a lot of history and nuance behind how various views on the matter formed. The addition of multiple constellations and not-insignificant advances in the science of GNSS and supporting resources have changed the proposition. However, for the most part, skilled practitioners have, through diligent and thoughtful testing, come to reasoned conclusions as to when one or the other should be used, when they need to meet error budgets, cost-effectiveness considerations, and productivity goals.

Concerning though, is the massive influx of new users, particularly for drone operations. Many are completely new to the world of high-precision GNSS corrections, post-processing, and concepts of geodesy and the nuances of accuracy and precision (as would relate to all of the above). One particularly disturbing misconception is that real-time eliminates the need for ground control points (GCP) for all projects. This is not a slam on drone users; most do have a handle on the subject or are more than willing to ask for advice. Unfortunately, a lot of the advice is fraught with misconceptions that may have formed from decades of past experiences (from long ago) or anecdotes. Vendors can be quite helpful but are sometimes not well-versed in these matters. The drone and software can be amazing, but the nuances of GNSS, geodesy, etc., are not necessarily their forte. More on the question as it relates specifically to drone operations later…

So, which is better and when? There’s a lot to unpack…

The Spectrum

Differentially correcting GPS, and now GNSS, has been the standard for deriving high-precision positions out of observations. Double differencing, single differencing, accounting for sources of error like ionospheric and tropospheric states, corrections for clocks and orbits, different code biases, and even correcting for ocean-tide loading on coastal plates (no kidding) can now be in the mix.
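To make the differencing idea a little more concrete, here is a minimal Python sketch of a double difference. It is a toy, not a processing engine: the observations are simplified to geometric range plus receiver clock error minus satellite clock error, every number is made up, and the function names (`simulate_obs`, `double_difference`) are hypothetical. It only shows why clock errors drop out; iono, tropo, multipath, and carrier-phase ambiguities are ignored.

```python
def simulate_obs(true_ranges, receiver_clock_m, sat_clock_m):
    """Toy observation (metres): range + receiver clock error - satellite clock error."""
    return {
        sat: rng + receiver_clock_m - sat_clock_m[sat]
        for sat, rng in true_ranges.items()
    }


def double_difference(obs_base, obs_rover, ref_sat, other_sat):
    """Form one double difference from per-satellite observations (metres)."""
    # Differencing between receivers removes each satellite's clock error.
    sd_ref = obs_rover[ref_sat] - obs_base[ref_sat]
    sd_other = obs_rover[other_sat] - obs_base[other_sat]
    # Differencing between satellites then removes both receiver clock errors.
    return sd_other - sd_ref


# Made-up values, for illustration only.
sat_clock_m = {"G01": 12.5, "G07": -3.25}
base_ranges = {"G01": 20_000_000.0, "G07": 21_500_000.0}
rover_ranges = {"G01": 20_000_150.0, "G07": 21_499_900.0}

obs_base = simulate_obs(base_ranges, receiver_clock_m=250.0, sat_clock_m=sat_clock_m)
obs_rover = simulate_obs(rover_ranges, receiver_clock_m=-75.0, sat_clock_m=sat_clock_m)

dd_with_clocks = double_difference(obs_base, obs_rover, "G01", "G07")
dd_geometry_only = double_difference(base_ranges, rover_ranges, "G01", "G07")

print(dd_with_clocks, dd_geometry_only)
```

Both values come out the same, which is the point: what survives the double difference is geometry (plus, in real data, whatever error sources differ between base and rover).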

The evolution of real-time began when on-the-fly initialization could be achieved; something that had otherwise been limited to static sessions and post-processing (though post-processed kinematics can be the right choice for certain drone operations).

There’s a wide range of methods that can yield high and not-so-high precision results. Each can be the right fit for different needs: surveying, construction, resource mapping, etc. It depends on your error budget and your cost and productivity goals: the element of “fit for purpose”.

There are great “resource grade” correction services, in ranges from sub-meter to sub-foot to decimeter. The higher the expected (or claimed) precision, the more complex, and therefore understandably expensive, the correction method or service will be. While there is a new wave of global real-time networks that tout “centimeter anywhere”, this is often stated to easily impress those who do not understand the nature of spatial precision and accuracy. How it plays out in real-world scenarios should come with a lot of qualifiers.

Precise Point Positioning (PPP) services (e.g., clock, orbits, and regional iono model products delivered via the web, or communications satellites) are on the rise and provide amazing results, suitable for many applications. But, as they have not yet achieved the tight results of RTK and RTN (in both horizontal and vertical precision), I’m leaving PPP out of this discussion about RTK and NRTK. PPP is, though, something you should try as it might meet some of your needs. I digress…

Otherwise, the choices for the highest precision GNSS for field ops boil down to traditional real-time kinematic (RTK) and network RTK (NRTK/RTN).

Fishing in a Rain Barrel… Mostly…

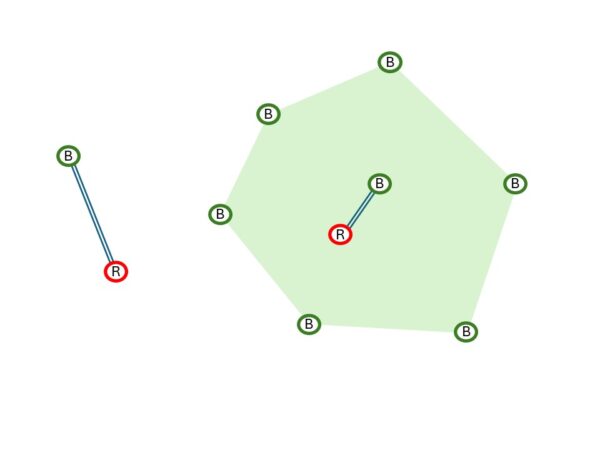

Under optimal conditions, base-rover RTK cannot be beat. Absolutely… but there are always caveats. Now we need to unpack “optimal”. If you set up a “site base”, under a kilometer from where you’ll be running your rover (or drone), you can expect incredibly tight results. It’s like fishing in a stocked rain barrel: nearly guaranteed success. Of course, you might need to have someone keep an eye on it, and you need to establish the reference position. A site base can also log static observations for post-processed kinematic (PPK). Combined with GCPs, this is a powerful approach for drone ops. RTK and PPK are fundamentally doing the same thing, with the latter simply being done after the fact. There are advantages with PPK (in this situation) for processing with IMU data in a “forward-back-forward again” manner. And you can wait to use precise orbits, etc. It would take a separate article to go over the nuances of PPK.

You have super tight results from your site base if you’ve set it up in a clear-sky location, on a stable mount, clear of multipath and attenuation from vegetation, and you’ve worked out the geodesy or set it up on project control. That is, if it does not get stolen, knocked over, etc.

Now start moving further from the base, say out to 10km. On a low space-weather activity day, and if the tropospheric (weather) conditions are similar to those at the base, you are still going to get amazing results. An old rule-of-thumb for single-base RTK (for systems that do what was called “iono-free” style solutions) is that after 10km, in normal conditions, the sources of error begin to add up; there is deterioration over distance from the base. It is quite possible to get great results out further: to 20km, 30km, and some have managed to go further. Not that you should rely on it, but for fun, we’ve gotten fixes from Seattle to Spokane and Portland: hundreds of km. You should NEVER use such results.
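Receiver datasheets commonly express this deterioration over distance as a constant part plus a parts-per-million (ppm) part that scales with baseline length. As a rough sketch of that arithmetic, assuming an illustrative 8 mm + 1 ppm horizontal figure (a placeholder, not a value from any particular receiver) and a hypothetical helper named `rtk_error_mm`:

```python
def rtk_error_mm(baseline_km, constant_mm=8.0, ppm=1.0):
    """Nominal error (mm) from a 'constant + ppm' style specification.

    The default 8 mm + 1 ppm is an assumed, illustrative spec only.
    1 ppm of a 1 km baseline is 1 mm, so the ppm term is simply ppm * km.
    """
    return constant_mm + ppm * baseline_km


for km in (1, 10, 20, 30):
    print(f"{km:>3} km baseline -> about {rtk_error_mm(km):.0f} mm nominal")
```

This linear model is a nominal expectation under quiet conditions. It does not account for space weather, tropospheric differences between base and rover, multipath, or a poorly determined base position, any of which can dominate on a given day.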

On any given day, base-rover and, to some degree, network solutions can yield different results, especially considering space weather (iono) conditions and, to a lesser degree, local tropospheric conditions. Different baseline lengths can yield varied results, and there’s a particular potential hazard in mixing different baseline lengths.

A lot of folks’ views on RTK vs RTN were formed in the days when there was one constellation, only two signals, and RTN had not evolved to anywhere near what they can deliver today. To some degree, base-rover RTK has also evolved, but the gap between RTK and RTN has all but closed, depending on the conditions and approaches used.

Many RTN offer not only network solutions, but also single-base solutions from each reference station, sometimes in different flavors of correction. The different flavors of correction evolved mainly as compression approaches improved and multiple constellations were added. Some RTN offer multiple correction formats, mainly to support older rovers. A common modern style is RTCM 3.x MSM (multiple signal messages), delivered for both base-rover RTK and network solutions. There are some proprietary correction types, with improved compression, and certain other included message types suitable for specialized applications. But in general, there are few differences in results, beyond what more sats and signals bring.

Both RTK and RTN employ some of the same fundamentals to correct for sources of error: application of clocks and orbits, and differentially modeled iono and tropo delays. Note that a base and rover are limited to “broadcast” clock and orbit. RTN can apply more refined models. Base-rover is dependent on the specific conditions between the base and rover, along that one, singular path. RTN leverages and models the conditions from groups of bases, all around your location. But more on this later.

So, if you have a modernized base and rover, and a modernized RTN, delivering the same corrections format, then you could do true apple-apple comparisons at different distances from bases. However, it’s more like apple-banana; there’s more nuance to comparisons.

What’s Productivity Got to Do with It?

Why was network RTK invented if base-rover is better? First, define better, and under what circumstances. Back in the mid-1990s, when RTK was first developed, folks already envisioned networks of bases so that field users could simply fire up a rover and start shooting. Nice vision, but impracticalities arose. The bases would have to be spaced 10km apart for optimal single-base results (under most conditions). The need for a base radio at each is now moot with NTRIP, cell comms, and TCP/IP options, but at the time, that would have represented added cost, and most base radios could not reach the full 10km range anyhow.

By stepping back from the base-rover model and its limiting tether, clever R&D folks looked to wider area modeling, leveraging multiple bases. Through careful testing, they determined that bases could be spaced 30km, 50km, and even 70km or more apart (depending on local conditions). This spawned the development of RTN all over the globe, some encompassing entire countries. This enabled many more applications than just surveying, and RTN use boomed in other sectors, like asset mapping.
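The practical weight of those spacing figures shows up in a back-of-envelope station count. The sketch below assumes stations on an idealized square grid over a hypothetical 100,000 km² region, so the count is roughly area divided by spacing squared. Real networks follow terrain, usage, power, and communications, so treat the output as an order-of-magnitude illustration only. The function name `stations_needed` is made up for this example.

```python
import math


def stations_needed(area_km2, spacing_km):
    """Rough count of reference stations on a square grid (an idealization)."""
    return math.ceil(area_km2 / spacing_km ** 2)


region_km2 = 100_000  # hypothetical region size, for illustration only

for spacing_km in (10, 30, 50, 70):
    count = stations_needed(region_km2, spacing_km)
    print(f"{spacing_km:>2} km spacing -> roughly {count} stations")
```

Going from 10km to 70km spacing cuts the idealized count from about a thousand stations to a couple of dozen, which is why wide-area modeling made regional and national networks feasible.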

However, early RTN were hobbled by a single constellation, only two signals, rough clock and orbit resources—early implementations were nowhere near as good as the present day. So, in a way, they might have “sucked”. Still, the productivity gains of being able to step out of the rig, and fire up the rover were obvious, for appropriate work with realistic expectations. RTN also provided a precise and consistent geodetic reference. This has become even more important as national geodetic entities around the world are relying more on active control (CORS) and less on legacy passive marks.

A lot of users expressed doubt about RTN, citing “too much of a black box”. Truthfully, there’s a lot of black box in the technology of RTK as well. This is where understanding the fundamentals of how they work, testing, and experience count. A persistent misconception about RTN is that it uses a “virtual” base position. This matter was muddied by competing marketing stances in the early days of RTN. “Virtual” is true of the modeling used to create corrections, but there’s still a hard link to a real base, in master-auxiliary, VRS, and other NRTK approaches. For example, in VRS style corrections, there is a message called PBS (physical base station) or PRS (physical reference station). The modeling of iono and tropo is derived for the rover location, from multiple bases, but the hard reference coordinates come from real bases. In many types of rover software, you can export vectors from the rover to the PBS/PRS. Additionally, there have been extensive tests commissioned by large geomatics entities, like the Ordnance Survey of the UK, including different NRTK solutions, and they found little or no difference between them.

The fact that RTN are connected to the net by default and run on powerful servers that can do much more heavy lifting than a rover, enables the implementation of significant technological advances. As mentioned earlier, rovers are limited to “broadcast” clocks and orbits. Those are among the simplest types of clock and orbit products. RTN can bring in refined products, like “ultra-rapid orbits”, directly via the web. For traditional post-processing, it was often standard to wait days, or even a week, for “precise” orbits. You should try this: if you post-process with precise and ultra-rapid orbits, you may see little or no difference. Key to this is that some manufacturers of RTN software have global tracking networks with individually modeled receivers and antennas, that produce clock and orbit products (for all constellations) that are at least as good as, and sometimes better than, those from public sources. Their motivation to do this is mainly to support their PPP services, but RTN are a downstream beneficiary.

All of that server-side processing means that a continuing succession of algorithmic advances benefits RTN. With 4-5 constellations having as many as 23 signals between them (particularly since BeiDou and Galileo reached full complement in 2020), it would not be fair to compare modernized RTN with legacy RTN.

Not all base-and-rover setups are the same (more on that later), and the same goes for RTN. Who is operating them, what their experience is, how they manage geodesy, and more, can make a difference, though most modernized RTN software makes all of this easier to do. Some operators have more resources than others and are able to maintain optimal spacing. Often, it is decided to put stations closer together, around areas of most usage, for redundancy if nothing else. In regions of little relief and more consistency in tropospheric conditions, bases can be further apart. There is a persistent misconception that having a base on top of a mountain means better coverage. No, it is not like a radio. It is simply receiving observations from the satellites, and clear sky is the driver, not elevation. In fact, if the base is on a mountain, and you’re working down in the flatlands, the tropospheric differential may be tainting results.

Many RTN have become default extensions of national spatial reference systems, and many serve the scientific community; both demand the highest quality. That means quality and stability of mounts, sound geodetic determinations, tight network integrity, and tools to maintain these essential elements.

Some RTN have the resources to keep up with developments in constellations, newer signals, and newer processing strategies. For example, some RTN augment modeling with PPP results as a check on reference position velocities or apply individually developed code bias calibrations for every base/antenna pair. A slight detour on the subject of signals: The U.S. NAVSTAR (GPS) constellation is nearing completion of the addition of L5. L5 is one of many modernized signals that represent advantages on several fronts. I remember quite a few folks who said they were not going to buy new rovers until the L5 rollout was complete. Well, while L5 was being built out, newer constellations came along that already added 3rd signals, and sometimes 4th, 5th, and 6th. Third signals processing (and more) has brought a noticeable boost (if your rover supports this).

There’s been a wave of new corrections services, with more added all the time, but they are not necessarily equal. Some were designed for resource-grade or vehicular autonomy applications. While most bring multi-constellation capabilities, they may only support 2 signals per constellation. To achieve the best results, an RTN has to navigate a complex path to apply all the newest and best advances. Cutting corners might not deliver the best results.

Hardware and Software Quality Matters

In optimal conditions, someone can still use a legacy, GPS-only rover and get adequate results. Modern rovers, bases, and RTN, however, can bring new levels of consistency and reliability. But the new magic does not often come cheaply.

There’s a lot going on under the hood of modernized RTN, and rovers. There are some long-held misconceptions about how bases, rovers, and RTN work. Looking under the hood of a typical rover reveals a number of key things. One is that there are engines that handle observations, and other engines that do the positioning magic. The benefits of advanced algorithms in positioning engines are not insignificant. While the secret of RTK is out of the bag, and lower-cost rovers abound, the advantages of higher-end rovers need to be considered (though some of the new wave of mid-range rovers are sneaking up on their pricier cousins).

It is possible to buy a super-affordable rover, or buy a GNSS board and roll your own, and (under the right conditions) get good results. Similarly, one could buy low-cost bases and create a shake-and-bake (single base style) RTN. Network corrections are much more complex. Compromises are not without consequence.

Like the old Moody Blues album title, it’s a “question of balance”. You need to balance error budgets, productivity, and cost. Valid cases can be made for low, mid, and high-end bases, rovers, and networks. It all depends…

Let’s consider this in terms of the wave of drone use. Depending on the goals and desired deliverables, you need to make carefully thought-out choices. The workflows and technologies applied could range from “fly it and let the software process the images (or lidar)” into an image or model that has sufficient relative integrity for some applications to, on the other end of the scale, a well-constrained deliverable that fits real-world coordinates. There’s a lot of GCP avoidance, perhaps too much. No matter what approach you are taking, at least some GCP should be included.

The temptation of real-time-only might work for a few applications but could turn others into inadvertent fiascos. Here are several common approaches:

If one sets up a base on the site, this can log observations for short baseline PPK. Combined with GCPs, this is a powerful approach, and it is a standard for many mobile mapping projects. Or, if there is a permanent base from an RTN in the immediate vicinity, this can work well. A lot of RTN also provide “virtual RINEX” that can be used for PPK.

There are drone operators who tend to get a little cavalier about positioning, lured by the ease of simply going RTK-only, and (gasp) eschewing GCP altogether. Not cool. Very short baseline RTK, or even NRTK, can work well for many applications, but not without some mechanism for checking and further reconciling results, namely GCP.
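One simple form that checking can take is comparing independently surveyed points against the coordinates pulled from the drone deliverable and summarizing the differences as root-mean-square error (RMSE). The Python sketch below assumes a few points were surveyed on the ground and held out as checks. The point names, coordinates, and the function name `check_point_rmse` are all hypothetical, and the coordinates are assumed to already be in the same projected system and units (metres).

```python
import math


def rmse(values):
    """Root-mean-square of a list of residuals."""
    return math.sqrt(sum(v * v for v in values) / len(values))


def check_point_rmse(surveyed, derived):
    """Return (horizontal RMSE, vertical RMSE) over the surveyed check points.

    Both arguments map a point name to an (east, north, up) tuple in metres.
    """
    horizontal = []
    vertical = []
    for name, (east, north, up) in surveyed.items():
        d_east = derived[name][0] - east
        d_north = derived[name][1] - north
        d_up = derived[name][2] - up
        horizontal.append(math.hypot(d_east, d_north))
        vertical.append(d_up)
    return rmse(horizontal), rmse(vertical)


# Made-up coordinates, for illustration only.
surveyed = {
    "CP1": (1000.00, 2000.00, 50.00),
    "CP2": (1100.00, 2050.00, 52.00),
}
derived = {
    "CP1": (1000.03, 2000.04, 50.05),
    "CP2": (1099.97, 2049.96, 51.95),
}

h_rmse, v_rmse = check_point_rmse(surveyed, derived)
print(f"horizontal RMSE: {h_rmse:.3f} m, vertical RMSE: {v_rmse:.3f} m")
```

Whether the resulting numbers are acceptable depends on the error budget for the job. The sketch only reports the residuals; it does not decide anything for you.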

KWYD

In a recent exchange on a surveying forum, about the merits of RTK and NRTK, one of the comments was “it helps if you know what you are doing”. This was not a slight, but good advice. Knowing how this labyrinth of positioning solutions and options works, plus experience, and your own testing, is essential for making the best decisions about what to use, and how to use it.

There is a concept and term, in the competitive gaming community: KWYD, “know what you are doing”. Teams choose members that they can count on to handle specific scenarios. That concept holds true for real-time GNSS operations.

The answer to the question of which is “better”, RTK or NRTK, is somewhat nebulous and very conditional. Many surveying outfits maintain both base and rover capabilities, and RTN capabilities. They can benefit from the best of both worlds, depending on what they plan to do.

Beep on!
