Geoffrey Hinton: “If we don’t have AI regulations, we’re going to be in terrible trouble.”

Toronto-based Nobel laureate Geoffrey Hinton, widely regarded as the godfather of artificial intelligence, clearly stated this at the CIC National 3rd Annual Graham Lecture on International Affairs this month.

AI development is advancing at a rapid, seemingly boundless pace, and it needs guardrails to guide its direction.

Professionals want to include AI on their resumes. Industries want AI to be an integral part of their organizations. Meanwhile, bad actors seek to exploit every aspect of AI for their own short-term gain.

Undermining democracy, deceiving people into believing in events that never occurred, and using AI in the military to predict scenarios are a few of the uses that, without proper regulation, are a recipe for disaster.

Could this lead to human extinction? And have we even considered what it would mean to coexist with an entity more intelligent than ourselves?

Why do we need regulations and policy?

The advancement of AI beyond human intelligence is often viewed as inevitable, though its timeline remains uncertain.

In the meantime, establishing thoughtful laws, regulations, and policies is crucial to minimizing the risks associated with AI misuse. While corporations frequently promote AI as a tool for social good and increased productivity, this narrative remains a subject of ongoing debate.

At the same time, poorly designed government regulations can create barriers that hinder innovation and slow technological progress. Striking the right balance is therefore essential: policies must be robust enough to ensure accountability and prevent harm, yet flexible enough to support continued advancement.

Without effective oversight, the unchecked use of AI could result in serious consequences, including the amplification of misinformation and its widespread societal impact.

Machine learning can incorporate approaches such as Evolutionary Machine Learning (EML), where models iteratively improve by adapting to new data and increasingly complex problems. Through this process, systems effectively “evolve,” refining their internal structures—such as neural networks—to enhance performance over time.
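As a rough illustration of the "evolve and refine" idea, the sketch below fits a two-parameter linear model with a simple (1 + λ) evolution strategy: mutate the current weights, score each mutated copy, and keep the best one only if it improves on the parent. The data set, function names, and parameter values are all assumptions made for this example. It is a toy version of one evolutionary technique, not a description of any particular EML system.

```python
import random

# Hypothetical training data for the example: points on the line y = 2x + 1.
DATA = [(x, 2 * x + 1) for x in range(10)]


def fitness(weights, data):
    """Negative mean squared error of the linear model y = w0 + w1 * x."""
    w0, w1 = weights
    return -sum((w0 + w1 * x - y) ** 2 for x, y in data) / len(data)


def evolve(data, generations=200, offspring=20, sigma=0.1, seed=0):
    """(1 + lambda) evolution strategy over the two model weights."""
    rng = random.Random(seed)  # fixed seed so runs are repeatable
    parent = [0.0, 0.0]
    parent_score = fitness(parent, data)
    for _ in range(generations):
        # Mutation: each child is the parent plus Gaussian noise.
        children = [
            [w + rng.gauss(0.0, sigma) for w in parent]
            for _ in range(offspring)
        ]
        # Selection: the best child replaces the parent only if it is fitter.
        best_child = max(children, key=lambda c: fitness(c, data))
        child_score = fitness(best_child, data)
        if child_score > parent_score:
            parent, parent_score = best_child, child_score
    return parent, parent_score


if __name__ == "__main__":
    weights, score = evolve(DATA)
    print("evolved weights:", weights, "fitness:", score)
```

Because the parent is only ever replaced by a fitter child, the model's score never gets worse from one generation to the next. That is the sense in which such a system "evolves."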

As AI systems advance through these stages of capability, laws and regulations must also evolve in parallel. A dynamic regulatory framework is essential—one that can adapt to the changing nature, complexity, and potential impact of intelligent systems, ensuring appropriate oversight at each stage of their development.

Upcoming generations need to be educated and prepared for the possibility of machines that equal or surpass human intelligence. This involves promoting not just technical skills but also ethical understanding, critical analysis, and awareness of AI’s effects on society.

Additionally, creators and implementers of AI systems must be held responsible for how these technologies are used, especially when they cause harm or are used destructively. Setting clear accountability and ethical guidelines is crucial to ensuring that AI advances benefit society while reducing potential dangers.

Building a Trusted AI Verification Hub in Canada

Canada is well positioned to become a global leader in AI safety, auditing, and verification. As an early center for artificial intelligence research, the country can build on its history by becoming a trusted place where advanced AI systems are thoroughly tested, certified, and aligned with ethical principles before being deployed.

With its proposed regulatory framework, the Artificial Intelligence and Data Act (AIDA), Canada already has a basis for overseeing high-impact AI systems. By continuously improving and adapting this framework, Canada can establish clear and enforceable standards that encourage transparency, accountability, and safety—while preventing the development or misuse of AI for harmful or destructive purposes.

Japan offers a useful comparison. It is making significant investments in ethics-driven AI rooted in its cultural values. The Japanese government is prioritizing strategies that emphasize fairness, transparency, explainability, and accountability. Leading institutions such as the University of Tokyo and Kyoto University have established dedicated centers for AI ethics and governance, working closely with industry partners to examine the broader societal impacts of AI. Insights from this research inform the development of ethical guidelines and policy frameworks.

In addition, public engagement through workshops, awareness campaigns, and forums plays a crucial role in ensuring that AI systems align with societal needs and expectations.

This approach would foster innovation while ensuring that AI systems are assessed with the public good, local requirements, and societal benefits in mind.

The Future of Geospatial and AI

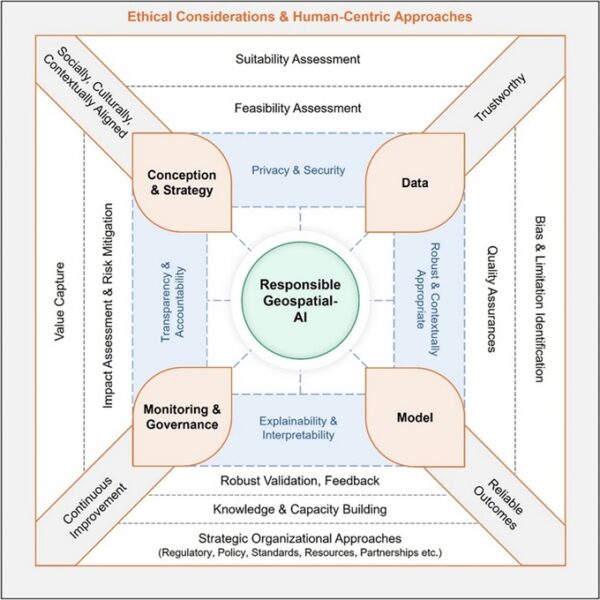

Geospatial data underpins nearly every modern system—from navigation and logistics to infrastructure planning and environmental monitoring. Location intelligence has become a foundational layer across industries, where precision, timeliness, and context are essential for effective decision-making. The process of selecting reliable inputs—imagery, sensor data, and contextual information—and transforming them into accurate outputs is no longer purely technical; it is a matter of trust.

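This is where agentic GIS comes into focus. Agentic GIS systems, powered by autonomous or semi-autonomous AI agents, can dynamically gather, interpret, and act on geospatial data. That autonomy also raises the stakes: an agent that acts on unreliable or biased inputs can carry those errors straight into real-world decisions.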

To address this, agentic GIS models must be designed with built-in safeguards—mechanisms for source validation, bias detection, and explainability. Just as importantly, their integration into organizational workflows should be gradual and strategic. Human oversight must remain central, with validation and authorization processes required for high-impact decisions. Rather than replacing human expertise, these systems should augment it.
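One way to picture these safeguards is as a gate that every agent-proposed action must pass before it takes effect. The sketch below is purely illustrative: the source names, the `ProposedAction` fields, and the three outcome labels are hypothetical, and a real system would need far richer validation, bias checks, and audit logging.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical allow-list of vetted data sources (names invented for the example).
TRUSTED_SOURCES = {"national_imagery_archive", "municipal_sensor_network"}


@dataclass
class ProposedAction:
    description: str  # what the agent wants to do
    source: str       # where the underlying geospatial data came from
    impact: str       # "low" or "high", assumed to be assigned upstream


def review(action: ProposedAction, approved_by: Optional[str] = None) -> str:
    """Return 'rejected', 'needs_human_approval', or 'approved'."""
    # Source validation: refuse to act on data from unvetted sources.
    if action.source not in TRUSTED_SOURCES:
        return "rejected"
    # Human oversight: high-impact actions need a named human approver.
    if action.impact == "high" and approved_by is None:
        return "needs_human_approval"
    return "approved"


if __name__ == "__main__":
    reroute = ProposedAction(
        description="Close a bridge to traffic based on flood imagery",
        source="national_imagery_archive",
        impact="high",
    )
    print(review(reroute))                            # waits for a human
    print(review(reroute, approved_by="duty_officer"))  # proceeds once authorized
```

The agent can still do the gathering and interpretation, but the final decision on anything high-impact stays with a person. That is the sense in which these systems augment human expertise rather than replace it.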

As Canada’s infrastructure priorities increasingly reflect sovereign imperatives—resilience, sustainability, and national security—the strategic integration of GIS and ethical AI becomes not just an opportunity, but a necessity.

Canada is well positioned to demonstrate that these goals are complementary, not competing. By anchoring ethical geospatial AI within robust governance frameworks and human-centered design principles, Canada can help define a global standard in which advanced capability and institutional accountability advance together.
