It wasn’t so much just a decade of cool new shiny equipment and software—there’s been much more going on in the background. The “coolest new gear” is often just the visible fruit of deeper innovation in foundational technologies.

It is always fun to highlight cool gear—especially those that made someone exclaim, “Whoa, that’s clever!” There’s lots of cool new gear and solutions out there, but I’m highlighting some that I feel are harbingers of things to come. In many ways, it might have been the most impactful decade for geomatics technologies. I could have done this as a click-bait “listicle” like “10 Best Geomatics Tech in 10 Years”… but I’ll try to curb my enthusiasm (while I drag you into a geo-geek vortex).

I’m sure I’ll be soundly trashed for leaving a few things out. “What about drones?” Well, by 2015, drones were already on a trajectory to become essential kit for surveying and mapping. Platforms have become (essentially) commoditized, with the real developments since coming in sensing payloads. And if I left out your product, that was not intentional; can’t list them all. I wanted to highlight a few examples of how certain underlying tech advances have been integrated.

That all being said, here are the (sort of) GoGeomatics “Whoa, That’s Clever” (WTC) Awards for 2017–2026. They are not in any particular order, and I’ve listed three foundational technologies last.

Freedom From the Tyranny of the Bubble

In 2018, the first no-calibration tilt-compensation GNSS rovers hit the mainstream. While appetites had been whetted by some early magnetic-field-calibrated tilt-compensation systems, those left a lot to be desired and left many skeptical of any tilt compensation. The new approach to tilt, by comparison, is stellar.

Folks tried to shake and break the new no-calibration tilt systems (me included) but discovered they could match or best bubble-centric operations (c’mon, chill!). It seems counterintuitive to expect positional stability through motion, but that is just what such systems do: they process GNSS-observed movement combined with data from inertial sensors (IMU). Once folks got over their initial and understandable skepticism, improved field efficiencies became obvious, especially for topo and stakeout. Instead of move-then-level (and hope it stays plumb while you store the point), with the new tilt, you just point and store. This innovation is now standard on nearly all GNSS rovers released since.
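For those who like to see the geometry, here is a minimal sketch of the last step only: reducing a tilted antenna position to the pole tip. It assumes the GNSS+IMU fusion has already produced a tilt angle and the azimuth toward which the top of the pole leans; the function name, parameters, and local east/north/up frame are all hypothetical choices for illustration, not any manufacturer's implementation.

```python
import math

def pole_tip_from_tilted_antenna(antenna_enu, pole_length_m, tilt_deg, lean_azimuth_deg):
    """Reduce an antenna position to the pole tip for a tilted pole.

    antenna_enu      -- (east, north, up) of the antenna reference point, metres
    pole_length_m    -- distance from pole tip to antenna reference point
    tilt_deg         -- pole tilt from vertical (0 = plumb); assumed known
    lean_azimuth_deg -- azimuth (clockwise from north) toward which the pole
                        top leans; assumed known from the GNSS+IMU solution
    """
    tilt = math.radians(tilt_deg)
    azimuth = math.radians(lean_azimuth_deg)

    # Horizontal and vertical components of the tip-to-antenna vector.
    horizontal = pole_length_m * math.sin(tilt)
    d_east = horizontal * math.sin(azimuth)
    d_north = horizontal * math.cos(azimuth)
    d_up = pole_length_m * math.cos(tilt)

    east, north, up = antenna_enu
    # The tip sits "behind and below" the antenna along the pole axis.
    return (east - d_east, north - d_north, up - d_up)
```

With a 2 m pole leaning 30° toward the east, the tip lands 1 m west of the antenna; with zero tilt, it reduces to the familiar "subtract the pole height" case. The hard part in a real rover is estimating the tilt and azimuth reliably while the pole is moving, which this sketch simply takes as given.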

The use of multi-sensor stacks for positional stabilization is not limited to GNSS rovers. Stacking has been adopted for other standard kit, such as drones, mobile mapping systems, and just about any new geomatics field-capture device. You’ll see variations on this type of innovation repeated in many of the following examples.

Image-Based Terrestrial Reality Capture Steps Up

Creating point clouds from images is certainly nothing new. However, relying on images alone has, arguably, been viewed as inferior to large-format terrestrial lidar scanners. A few short years ago, a relatively new firm with roots in creating camera systems for autonomy took a good long look at what could up the game for terrestrial image-based reality capture.

Areas ripe for improvement included better cameras, individual calibration of those cameras, GNSS+IMU positioning (again), and a streamlined processing workflow: point-cloud creation and classification, with feature recognition in development. The resultant system is a handheld capture device, very simple to use, with cloud-based processing. The results can match conventional scanning over short ranges (i.e., under 30 m) and can even provide more points. This very clever solution has been rapidly adopted, by utilities in particular, and by surveyors (surprise!).

Digital Twins For Infrastructure Development and Operations

Digital twins began adoption in the industrial and manufacturing sectors decades ago. Adopting them for infrastructure has been a much more complex proposition, as each project can be quite unique. Infrastructure and AEC have been well-served (mostly) by design and CAD software, but the transition to full 3D has not yet reached the hoped-for nirvana. Digital Twins became the new buzz term: full, interactive 3D, with rich data attached, and (eventually) in real time. There was great enthusiasm for the dream of a “continuous representation of reality” for planning, design, construction, and operation of infrastructure. The challenge has been in how to get there…

The term “digital twin” tends to get overworked. There are folks who think that scanning something and making a 3D mesh out of the point cloud alone makes it a digital twin. *cough* To truly realize the value of a digital twin, there is much more to it: currency, spatial integrity, data completeness, and collaboration mechanisms. While not alone in bringing digital-twin solutions to the infrastructure sector, Bentley Systems’ iTwin technology checks nearly every box, and its digital-twin solutions are applied to real-world projects, large and small.

Folks have envisioned a digital twin as some giant central model up in the cloud; that can be quite impractical for collaboration between multiple project teams and represents a single point of failure. Bentley was an early developer of a different approach. A foundational element was Bentley’s 3D iModel, with its accommodation of transactional updates. There can be multiple copies of the model, say for different project teams, but each is updated with just the changes made by the others, rather than downloading full model copies every time. In 2017–2018, co-founder and then-CTO Keith Bentley announced the iTwin platform, which is becoming the common glue embedded in their expansive range of design software. More recently, they also acquired Cesium (founded in 2019), which had wowed the spatial community with its open platform for visualization and digital twins; this will bring more functionality to the iTwin platform.
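The “just the changes” idea can be sketched in a few lines. To be clear, this is a toy illustration of transactional updates in general, not the iTwin or iModel API; the class names, fields, and element IDs below are all hypothetical.

```python
from dataclasses import dataclass, field

@dataclass
class Changeset:
    """One transaction on a shared timeline (hypothetical structure)."""
    index: int
    changes: dict  # element id -> new properties, or None to delete the element

@dataclass
class ModelCopy:
    """A team's local copy of the model, tracking how far it is synced."""
    elements: dict = field(default_factory=dict)
    applied_through: int = 0

    def apply(self, changeset):
        # Changesets must be applied in order, with no gaps.
        if changeset.index != self.applied_through + 1:
            raise ValueError("changeset applied out of order")
        for element_id, properties in changeset.changes.items():
            if properties is None:
                self.elements.pop(element_id, None)
            else:
                self.elements[element_id] = properties
        self.applied_through = changeset.index

def sync(model_copy, timeline):
    """Apply only the changesets this copy has not yet seen; return how many."""
    pending = sorted(
        (c for c in timeline if c.index > model_copy.applied_through),
        key=lambda c: c.index,
    )
    for changeset in pending:
        model_copy.apply(changeset)
    return len(pending)
```

A copy that is two changesets behind pulls two small deltas, not the whole model. That is the practical difference between this approach and passing around full model files.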

But the key gee-whiz part of this is that real-world infrastructure projects, including some of the largest worldwide, are working and breathing in full digital-twin environments. Nice to see a concept evolve past the buzz-term-hype phase.

Embedded Neural Processing… and More Tilt

This is a two-fer: an AI update for an essential surveying instrument, and another tilt-compensation approach.

First, it is great to see that some of the folks who develop the essential tools of the surveying and geospatial disciplines are not bowing to the temptation to make tools dramatically different just to appear cutting-edge. Remember when EV cars first came out and they were deliberately designed to look dramatically different… well, that kind of backfired. Some things do not need to be changed just for the sake of change. The ubiquitous robotic total station (RTS) is part of a surveyor’s essential kit. Its form factor and the way it works were not screaming for change, just improvements in how it does its thing. It was kind of refreshing to see the first of a new wave of RTS that wasn’t dramatically different… it just got smarter and faster. Embedded neural processing units (NPUs) power no-hype AI to improve things like target recognition and tracking, with plenty of extra horsepower to spare for new functions moving forward.

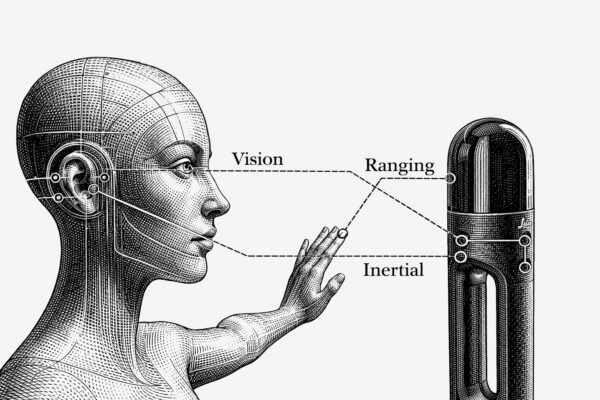

A companion innovation is another form of tilt compensation that does not require GNSS, as work is not always done where there is a sky view. An optical+IMU tilt pole, released in 2022, was the first (and still the only) one of its kind. Dual radios in the handle (of compatible RTS) process a very clever exchange of sensor data to bring high-confidence tilt compensation, even with an inverted pole. I test-drove this and was gobsmacked at how well it worked… a whiz for stakeout as well. They also added auto pole-height and target-identifier functions.

Image and Lidar Stabilization

Again, the spatial integrity of an observed point is no longer defined by the plumbness of a survey pole and orientation/ranging from an instrument alone. That concept of stability through motion has been taken even further. Patterns in images and lidar point clouds can be progressively compared and matched as the instrument moves. There are “visual simultaneous localization and mapping” (visual SLAM) approaches, some using real-time imaging, and some using SLAM lidar. And then there are combined solutions leveraging both (e.g., Leica calls this “Grand SLAM”).
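To give a feel for “compare and match as the instrument moves,” here is a minimal 2D sketch of one building block: recovering the rotation and shift between two sets of already-matched feature points with a closed-form least-squares fit. It assumes the matching has been done and works in a flat plane; real SLAM pipelines work in 3D, find the matches themselves, reject outliers, and fuse inertial data. The function name is hypothetical.

```python
import math

def estimate_rigid_motion_2d(previous_pts, current_pts):
    """Least-squares rotation and translation mapping previous_pts onto current_pts.

    Both arguments are equal-length lists of (x, y) tuples, where point i in
    one list is assumed to be the same physical feature as point i in the other.
    Returns (rotation_radians, (shift_x, shift_y)).
    """
    n = len(previous_pts)
    # Centroids of both point sets.
    pcx = sum(p[0] for p in previous_pts) / n
    pcy = sum(p[1] for p in previous_pts) / n
    qcx = sum(q[0] for q in current_pts) / n
    qcy = sum(q[1] for q in current_pts) / n

    # Accumulate dot and cross products of the centred point pairs.
    sum_dot = 0.0
    sum_cross = 0.0
    for (px, py), (qx, qy) in zip(previous_pts, current_pts):
        ax, ay = px - pcx, py - pcy
        bx, by = qx - qcx, qy - qcy
        sum_dot += ax * bx + ay * by
        sum_cross += ax * by - ay * bx

    rotation = math.atan2(sum_cross, sum_dot)
    cos_r, sin_r = math.cos(rotation), math.sin(rotation)
    # Translation is whatever remains after rotating the first centroid.
    shift_x = qcx - (cos_r * pcx - sin_r * pcy)
    shift_y = qcy - (sin_r * pcx + cos_r * pcy)
    return rotation, (shift_x, shift_y)
```

The result describes how the features appear to move between the two frames; the instrument's own motion is the inverse of that transform. Chain enough of these frame-to-frame estimates together and you have a trajectory, which is the essence of carrying position through motion.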

Such solutions are being rapidly integrated into a wide range of reality-capture systems: mobile mapping, handheld SLAM, backpacks, drone payloads, and more. And now, we are seeing this on GNSS rovers. An example is the CHC ViLi i1000, which has a small scanning head mounted on it; they call the solution “visual lidar”. The captured patterns are progressively compared as the rover moves, and you can carry the solution (for a limited time and distance) even where you lose view of the sky. There are different variations on this popping up now among several brands, but this one put a big stamp on the idea.

GNSS rovers are becoming multi-sensor stacks—and why not? If you are running an instrument, why not capture more data incidentally, especially if it does not add substantial cost or time?

Having a Ball With Computer Vision

Sometimes, something new looks a little odd, or even silly, but that belies just how devilishly clever it is, and how it can solve real-world problems. A great example is the Leica Geosystems iCON Trades, an oddball-looking little instrument with a companion spotted ball on a pole. Once it was explained to me how it worked, it all made sense, and it was definitely a “Whoa, this is clever!” moment.

There are multiple innovations packed into this system. However, the AI-driven computer vision (CV) that resolves the range and orientation of the spotted pattern on the target ball (in real time) is what takes the cake. I test-drove it. Again, gobsmacked… it will be amazing for construction, especially stakeout, and a smaller ball will make structural templating much simpler and more precise. This could be a harbinger for CV implementation in other instrumentation.

Another AI+CV solution shows promise for improved proximity awareness for site safety. You can put multiple cameras on, say, construction or mining equipment, and in real time, the images can be interrogated against a growing (global) database of features it can recognize and use to alert the operator. Again, this is when embedded NPUs make the magic happen—many eyes, a big brain, and a long memory.

Quantum Sensing

While a long way off for most surveying and mapping applications, there are now some quantum-based geospatial solutions compact and affordable enough for real-world applications. While things like quantum lidar, cameras, radar, ground-penetrating sensors, and more are still in early R&D phases, the fields (no pun intended) of gravimetry and magnetometry are already moving ahead in the realm of the sub-atomic. Quantum gravimeters, though presently large and costly, show promise for improving geoid modelling and even detecting underground features. Quantum inertial measurement systems (think quantum gyroscopes) are also still large and costly, but show promise for, say, submarine navigation.

Another type of navigation, magnetometric, just broke a price/size/precision barrier. This is not using the global magnetic field (which keeps changing). Instead, it uses “maps” of the magnetic signatures naturally embedded in the crust of the globe—very spotty but unique patterns. A quantum-solutions provider in Australia has built a small device that fits in your hand (though the full support system can be as much as 25 kg) that looks at those unique patterns in stored magnetometric maps (like looking at a QR code) and, as it moves, can determine a location and heading. In head-to-head tests with conventional tactical-grade IMUs, mounted in aircraft and ground vehicles, it outperformed the IMU.
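The QR-code analogy can be boiled down to a toy example: slide a short run of measured magnetic readings along a stored profile and see where it fits best. This is only a one-dimensional sketch with a hypothetical function name and no claim to reflect how the provider's system actually works; a real solution matches against 2D or 3D maps and blends the result with other sensors.

```python
def locate_on_magnetic_map(map_profile_nt, measured_nt):
    """Find where a short run of measurements best fits a stored profile.

    map_profile_nt -- stored magnetic anomaly values (nanotesla) at evenly
                      spaced stations along a track
    measured_nt    -- consecutive readings taken at the same spacing
    Returns (best_start_index, sum_of_squared_differences).
    """
    best_index = None
    best_cost = float("inf")
    last_start = len(map_profile_nt) - len(measured_nt)
    for start in range(last_start + 1):
        # Compare the measurements against the map window beginning at `start`.
        cost = sum(
            (map_profile_nt[start + i] - reading) ** 2
            for i, reading in enumerate(measured_nt)
        )
        if cost < best_cost:
            best_index, best_cost = start, cost
    return best_index, best_cost
```

The spottier and more distinctive the pattern, the sharper the match, which is why those unique crustal signatures are an asset here and not a nuisance. As the device moves, successive matches also reveal the direction of travel.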

While implementation of other quantum sensors might be a ways off for conventional positioning and reality capture, it is inevitable—just a matter of when.

More Like Humans

The instruments of surveying and mapping have always taken cues from how the human machine perceives and “captures” our world. We orient our perceived world in the same fore-aft axis, left-right, and up-down (plumbed with the vials in our inner ears)—in a relatable manner, just like the conventions of our mapped and digital world (xyz, northing, easting, elevation, etc.). How human visual analyses gauge spatial relationships, size, and distance makes humans the original “visual SLAM” instruments.

While there might be other things going on under the hood of geospatial instruments with no human sensory counterpart (e.g., GNSS positioning and global Cartesian coordinates), for the most part, the instruments of reality capture (RC) closely mimic human spatial perception and navigation. Again, we are seeing elements of visual SLAM, CV, and neural processing in new RC solutions. And, at the neural-processing level, feature recognition works in much the same manner as it does for humans, by storing in, and recalling from, databases of general and unique patterns.

Developers of humanoid robots load them up with multiple sensor stacks to mimic humans in terms of spatial perception. While they can outpace a human counterpart (for certain tasks), for now, we can say: “Ha! You are just copying us”.

Low Earth Orbit

It was as if the world discovered the term “low Earth orbit” (LEO) less than a year ago. It is definitely a cool buzz term for communications satellite systems, and now positioning, navigation, and timing (PNT) systems. LEO is far from new; such orbits have been used for as long as humans have sent artificial satellites into space. But the current buzz is legit and will shake up the geospatial world—in a good way.

While there are advantages over medium Earth orbit (MEO) traditional GNSS satellites, in terms of certain aspects of geometry and resilience, it is not all roses. For one, LEO satellites cannot stay up there as long; a more rapid replacement cycle is required. And they are also simply signal-based, just like traditional GNSS satellites (though with some advantages).

Folks had visions of turning any and all communications satellites into navigation satellites. But the reality is that most LEO communications satellites are tiny, have limited power budgets, imprecise clocks, and do not have the types of calibrated antennas and orientation capabilities of GNSS satellites. You can’t simply task them to do double duty through wishful thinking.

Traditionally, MEO GNSS satellites are large and very expensive, but they are designed to meet a long list of requirements. In the present day, tech advances make it possible for LEO PNT satellites to be smaller, cheaper, and more tightly focused on specific functions. Cheaper is essential, considering the shorter life-cycles and need for commercial viability.

The approach to reap the benefits of LEO satellite PNT is dedicated satellites. And there is substantive progress in bringing such constellations to fruition, in both the private and public sectors. Just this year, the European Space Agency began broadcasting signals from one of the (eventual) 11 LEO navigation satellites in its Celeste demonstration constellation. GNSS equipment manufacturers have already started logging and analyzing the signals. Other constellation providers are already on task to do the same.

Similarly, a very forward-thinking firm, Xona Space Systems, is well underway in putting together a LEO satellite navigation constellation. GNSS equipment manufacturers, several of which will be familiar names to you, have already partnered.

To be clear, no one is talking about replacing MEO GNSS with LEO GNSS; instead, they will complement each other. Similar signal structures are the prevailing focus, to work together in combined solutions, just as the various MEO GNSS constellations are leveraged. You will likely not need to buy a separate GNSS rover.

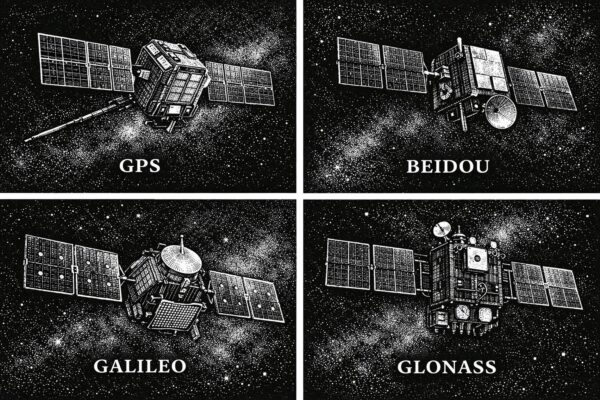

Foundational Technology: Multi-Constellation Comes of Age

In 2020, the Galileo and BeiDou constellations reached full complement (though more satellites have been added to each since). Once GNSS equipment manufacturers received the respective interface control documents (ICDs) for the satellites and signals, they set about enabling devices and rovers to utilize them. It’s not just more satellites, but more signals. L5 has long been envisioned as the magic bullet bringing third-signal solutions to the U.S. GPS (NAVSTAR) constellation. But as it turned out, in the period while L5 was being deployed, other constellations like Galileo and BeiDou implemented third, fourth, fifth, and even sixth signals. L5 is still a big deal, but across multiple constellations, there are multiple big deals.

Often, field users are pleasantly surprised at performance improvements, while others are stunned by how good it is compared to the single-constellation days. This has resulted in an interesting market twist. Let’s call it the “vacuum cleaner sales” scenario. Someone comes to your house with a brand new vacuum cleaner and shows you how well it performs compared to the canister vacuum you inherited from your grandma. You might think that the new brand is the best, when what you are really seeing is general improvement over time in the related technology. About 15 years ago, there was an almost comical tug-of-war between brands, each claiming the best performance under tree canopy. Fierce brand loyalties formed. In many ways, it may have just been a case of folks experiencing a new rover after using a first-generation, single-constellation rover for so long. More recently, many folks’ first experience with the performance boost of multi-constellation is with a mid-price-range rover, as the traditional top-brand models are by comparison often double or triple the price. Certainly, the top-end models may have certain advantages, but mid-range models have gained some serious street cred.

Manufacturers found that many of their legacy receivers did not have the processing horsepower to fully utilize all satellites and signals. They needed to bring out new models to take advantage. There has been a whole new wave of receivers with increased (often doubled) processing on board. Having more satellites has meant that some of the old rules of thumb do not necessarily apply. With so many in view, performance under vegetation canopy has improved, and general geometry has improved (at any given time). There was an old (valid at the time) step of repeating observations at a different time of day, or on a different day, to provide a different constellation geometry, to gauge repeatability of a solution. Again, with as many satellites in view as there are today, folks are coming to the realization that such long time splits may not be as beneficial as they were.

Speed, precision, the ability to work in more environments, and the reliability of GNSS positioning solutions have taken a tremendous leap forward, as has GNSS integration into many of the cool developments listed above.

Foundational Technology: AI Without Hype

Not new, but improved, bringing significant, positive change. Machine learning has been applied to geospatial applications for decades—for instance, for lidar point-cloud classification. The difference now is neural processing; it can outperform older machine learning approaches by orders of magnitude and help unclog legacy bottlenecks for processing.

Put AI for geospatial applications in the cloud, and/or embedded into field instruments, and you have the foundation for many of the “whoa that’s clever” geospatial innovations we’ve just looked at.

AI is not going away. I’d get into trouble if I inserted a certain New Yorker cartoon, but it showed a boardroom where someone is stating: “We need to rethink our strategy of hoping AI will just go away…”. Surveyors and geospatial practitioners are benefitting from AI, not just in their hardware and software solutions, but for sundry other tasks, like deed reading, analyzing scanned maps and plans, and even organizing other aspects of their businesses. Yes, yes, you can always go, “but it really messed up this one time, so it’s total garbage.” Or “It put six fingers on that picture of a hand”. If that is your litmus test, you might want to rethink assumptions based on limited anecdotes. Of course, you have to check and verify certain things, but if it can handle a lot of mathematical and straight-up data analyses rapidly, and at volume, it’s a mighty powerful thing that should not be ignored.

Foundational Technology: The Cloud and the Edge

There’s also been cloud processing for decades (albeit a sometimes frustrating and clunky cloud in the early days, but still usable for many purposes). The difference now is that the cloud has grown into something that, with qualifiers, is difficult to argue against using in at least some manner. You don’t have to go “all in”; pick and choose what works best for your needs. Sure, one can always bring up instances where it won’t work, like remote places with bad comms/interwebs. That’s what edge computing is for. Valid points, but more of an exception than a rule. It would be like saying, back in the early days of drone mapping, that the specter of forgetting batteries made it a flawed technology.

There are choices for every situation (and preferences, or habits). You can always rely on huge, beastly workstations, packing a bunch of servers, with piles of cores and stacks of processors and memory, in your office or truck, and process locally. There’s a case to be made, in certain situations, for that legacy approach. However, it can be a moving target. As the processing and analysis of reality-capture data becomes ever more sophisticated (e.g., automated feature recognition) and puts an ever-increasing premium on processing power, the pursuit of the perfect beast workstation might be an endless series of false horizons.

Edge computing is powering many field systems for preliminary processing. For mobile mapping systems, there might be a big box in the back seat that gives a head start on processing the deluge of captured data and doing things like on-the-fly anonymization (e.g., removing faces and licence plates from images). There are edge-computing modules, even on drone payloads for imaging and lidar processing. They might not do the whole job, but they let you check data completeness before leaving the site.

Present and future efficiency gains for geospatial applications come with a premium on processing resources. Perhaps there will be some future algorithmic approaches to doing some tasks, with less processing load than at present. However, by the time that is worked out, other tasks and applications will probably raise the load. Processing needs will only increase. The three main options—cloud, edge, and beast—each have advantages and disadvantages, so why not explore combinations of them?

Stacked Solutions

The beauty of what the geospatial sector has done with AI and the cloud is that the implementations improve on legacy methods and gear, and actually solve problems that have been plaguing practitioners in the sector. There is a dramatic difference between what the popular LLM AI tools are doing (with the inevitable stumbling) and what is increasingly powering geospatial applications in a concrete and reliable manner. Steadily, thoughtfully, and responsibly, the geospatial sector is doing remarkable things with these foundational technologies, and without the hype.

An interesting aside: I had noted earlier that a substantial amount of innovation seems to be coming from the upstarts, outside of the legacy list of big geospatial equipment and software providers. Several legacy big names have not made a big splash in a while. It may be that they simply see value in steady, no-hype updates, or are comfortable in their quality and market share. I hope folks are paying attention to the histories of, say, Kodak, Sears, Blockbuster, Blackberry, and others. 😉

It certainly has been an eventful decade, and when it comes to these innovations, we’re only seeing the beginning…

Beep on!
